In my previous blog post, I described gVisor as 'some stuff I can hardly understand'.

Technology is not only about code: understanding where it comes from, and why and how it was built, helps to understand the design decisions and the actual goals.

tl;dr: gVisor was open-sourced recently, but it has been running Google App Engine and Google Cloud Functions for years. It is a security sandbox for applications, acting as a "virtual kernel", but without relying on a hypervisor (unlike KataContainers). Now that it is open source, we can expect gVisor to support more application runtimes and to become portable enough to replace Docker's runc at some point, for those interested in this additional isolation level.

While in San Francisco for DockerCon'18, I visited the Google office to meet Googler Ludovic Champenois and Google Developer Advocate David Gageot, who kindly explained gVisor's history and design to me. Since then, some of the information required to fully understand gVisor has become public, so I can now blog on this topic. By the way, Ludo gave a talk on this topic at BreizhCamp: even though the name gVisor was never used, this was all about it.

History

gVisor introduces itself as "sandboxing for Linux applications". To fully understand this, we should ask: where does it come from?

I assume you have already heard about Google App Engine. GAE was launched 10 years ago and allowed you to run Python applications (and later Java) on Google infrastructure, paying only for the resources actually consumed. No virtual machine to allocate. Nothing to pay when the application is not in use. Had they launched it in 2018, they would probably have named it something like "Google Serverless Engine".

Unlike other cloud hosting platforms such as Amazon, Google doesn't rely on virtual machines to isolate the applications running on its infrastructure. They made the crazy bet that they could provide enough security layers to run arbitrary user payloads directly on a shared operating system.

A public cloud platform like Google Cloud is a prime target for any hacker. In addition, GAE applications run on the exact same Borg infrastructure as every other Google service. Hence the need for security in depth, and Google has invested a lot in security. For example, the hardware in their data centers includes a dedicated security chip to prevent hardware/firmware backdoors.

When GAE for Java was introduced in 2009, it came with some restrictions. This wasn't the exact JVM you were used to, but a curated version of it, with some APIs missing. The reason for those restrictions was that Google engineers had to analyse each and every low-level feature of the JRE that could require dangerous privileges on their infrastructure. Typically, java.lang.Thread was a problem.

Java 7 support for GAE was announced in 2013, two years after Java 7 was released. Not because Google didn't want to support Java, nor because they were lazy, but because Java 7 came with a new internal feature, invokedynamic, which introduced a significant new attack surface and required a huge investment to implement adequate security barriers and protections.

Then came Java 8, with lambdas and many other internal changes. And the plans for Java 9, with modules, promised even more complicated, brain-burning challenges for supporting Java on GAE. So they looked for another solution, and this is how the internal project that became gVisor started.

Status

The gVisor code you can find in Google's GitHub repository is the actual code running Google App Engine and Google Cloud Functions (minus some Google-specific pieces, which are kept private and wouldn't make any sense outside Google's infrastructure).

When Kubernetes was launched, it was introduced as a simplified (re)implementation of Google's Borg architecture, designed for smaller workloads (Borg runs *all* of Google's infrastructure as a huge cluster of hundreds of thousands of nodes). gVisor isn't such a "let's do something similar in OSS" project, but a proven solution, at least for the payloads supported by Google Cloud Platform.

To better understand its design and usage, we need to get into the details. Sorry if you get lost in the following paragraphs; if you don't care, you can scroll directly down to the kitten.

What's a kernel, by the way?

"Linux containers", like the ones you use to run with Docker (actually runc, default low level container runtime), but also LXC, Rkt or just systemd (yes, systemd is a plain container runtime, just require a way longer command line to setup :P), all are based on Linux kernel features to filter system calls, applying visibility and usage restrictions on shared system resources (cpu, memory, i/o). They all delegate to kernel responsibility to do this right, which as you can guess is far from being trivial and is the result of a decade of development by kernel experts.

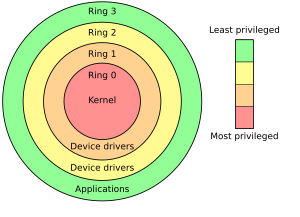

Linux defines "user space" (ring 3) and "kernel space" (ring 0) as CPU execution levels. "Rings" are protection levels implemented by the hardware: the kernel, set up during boot, can drop into a less privileged ring to run programs, but a program can't climb back into a more privileged ring on its own, and each ring can only access a subset of hardware operations.

An application runs in user space, where many hardware-related operations are off-limits: for example, allocating memory, which requires interacting with the hardware and is only available in kernel space. To get memory, an application has to invoke a system call, a predefined procedure implemented by the kernel. When an application calls malloc, the C library eventually delegates the underlying memory operation to the kernel (malloc manages its own heap and only asks the kernel for more, via brk or mmap, when that heap needs to grow). But remember: there's no way to simply jump from user space into kernel space, so this is not just a function call.
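
Python's standard mmap module gives a quick way to skip the C library's allocator and ask the kernel for memory directly. The sketch below (the function name allocate_from_kernel is ours) requests an anonymous mapping; on Linux this is served by the mmap system call, and because the kernel hands out whole pages, the size is rounded up to mmap.PAGESIZE.

```python
import mmap

PAGE = mmap.PAGESIZE

def allocate_from_kernel(size):
    """Request at least `size` bytes of anonymous memory from the kernel.

    mmap.mmap(-1, n) creates an anonymous mapping (no file behind it),
    which on Linux goes through the mmap system call, not malloc's heap.
    """
    pages = (size + PAGE - 1) // PAGE  # round up to whole pages
    return mmap.mmap(-1, pages * PAGE)

if __name__ == "__main__":
    buf = allocate_from_kernel(10_000)
    buf[:5] = b"hello"
    print(len(buf), buf[:5])  # length is a multiple of the page size
    buf.close()
```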

How system calls are implemented depends on the architecture. On Intel architectures, it has traditionally relied on interrupts, which are signals the hardware uses to handle asynchronous tasks and external events, like timers, a key pressed on the keyboard or an incoming network packet. Software can also trigger interrupts (32-bit Linux historically used int 0x80; x86-64 uses the dedicated syscall instruction, which works on the same principle), and parameters are passed to the kernel as values set in the CPU's registers.
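
On x86-64 Linux, the convention is fixed: the system call number goes in rax and up to six arguments go in rdi, rsi, rdx, r10, r8 and r9, with the result coming back in rax. The pure-Python sketch below only models that register layout (nothing is actually sent to the kernel); load_registers is a hypothetical helper for illustration.

```python
# x86-64 Linux system call convention (modelled only; nothing is executed):
# number in rax, arguments in rdi, rsi, rdx, r10, r8, r9, result back in rax.
# r10 stands in for rcx because the `syscall` instruction overwrites rcx.
ARG_REGISTERS = ("rdi", "rsi", "rdx", "r10", "r8", "r9")

def load_registers(number, *args):
    """Return the register values a program sets up before a system call."""
    if len(args) > len(ARG_REGISTERS):
        raise ValueError("Linux system calls take at most six arguments")
    registers = {"rax": number}
    registers.update(zip(ARG_REGISTERS, args))
    return registers

if __name__ == "__main__":
    print(load_registers(12, 0))  # brk(0): {'rax': 12, 'rdi': 0}
```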

When an interrupt happens, execution of the current program on the CPU is suspended, and the handler (trap) assigned to that interrupt is executed in kernel space. When the trap completes, the initial program is restored and resumes its execution. As an interrupt only allows passing a few parameters, typically a system call number and some arguments, there's no way for the application to inject illegal code into kernel space (as long as there's no bug in the kernel implementation, typically a buffer overflow weakness).
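
To see that only a number and a few arguments cross the boundary, glibc exposes a generic syscall() wrapper that issues a system call by number. The sketch below uses it through Python's ctypes to call getpid, which is number 39 on x86-64; the result should match os.getpid(). Numbers differ between architectures, so the helper (raw_getpid, our own name) simply returns None elsewhere.

```python
import ctypes
import os
import platform

SYS_GETPID = 39  # getpid's number on x86-64 Linux; other architectures differ

def raw_getpid():
    """Issue getpid by number via libc's syscall(); None if not x86-64 Linux."""
    if platform.system() != "Linux" or platform.machine() != "x86_64":
        return None
    libc = ctypes.CDLL(None, use_errno=True)  # symbols of the running process, incl. libc
    libc.syscall.restype = ctypes.c_long
    # syscall() puts the number in rax and executes the syscall instruction.
    return libc.syscall(ctypes.c_long(SYS_GETPID))

if __name__ == "__main__":
    print(raw_getpid(), os.getpid())  # same value on x86-64 Linux
```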

The kernel trap handling the system call then performs the memory allocation. In doing so, it can apply restrictions (so your application can't allocate more than xxx MB, as defined by its control group) and carry out the allocation on the actual hardware.
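
The limit check can be pictured as a counter charged on every allocation. This is a deliberately simplified Python model, not the kernel's code: the class and method names are hypothetical, and a real memory cgroup would first try to reclaim memory and may invoke the OOM killer rather than simply failing the request.

```python
import errno

class MemoryCgroup:
    """Toy model of a memory control group with a hard limit."""

    def __init__(self, limit_bytes):
        self.limit = limit_bytes
        self.usage = 0

    def charge(self, nbytes):
        """Account an allocation: 0 on success, -ENOMEM if over the limit."""
        if self.usage + nbytes > self.limit:
            return -errno.ENOMEM
        self.usage += nbytes
        return 0

    def uncharge(self, nbytes):
        """Account a release of memory."""
        self.usage = max(0, self.usage - nbytes)

if __name__ == "__main__":
    MiB = 1024 * 1024
    cg = MemoryCgroup(limit_bytes=64 * MiB)
    print(cg.charge(48 * MiB))  # 0
    print(cg.charge(32 * MiB))  # negative ENOMEM: 80 MiB would exceed 64 MiB
```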

What's wrong with that? Nothing, from a general point of view: this is a very efficient design, and the system call mechanism acts as a very efficient filter... as long as everything in the kernel is done right. In the real world, software comes with both bugs and unexpected security design issues (not even considering hardware ones), and so does the kernel. And as the Linux kernel protections used by Linux containers take place within kernel space, anything wrong there can be abused to break the security barriers.

If you check the number of CVEs per year for the Linux kernel, you will understand that being a security engineer is a full-time job. Not that the Linux kernel is badly designed; it's just that a piece of software used by billions of devices, responsible for managing shared resources with full isolation on a large set of architectures, is... damn, a complex beast!

Congrats to the Linux kernel maintainers, by the way: they do an awesome job!

Google has its own army of kernel security engineers maintaining a custom kernel, both for hardware optimisation and to enforce security by removing/replacing/strengthening everything that may impact their infrastructure, while also contributing to the mainstream Linux kernel when it makes sense.

But that's still risky: someone who discovers an exploit in the Linux kernel might disclose it publicly before it gets fixed, or could even try to use it to hack Google.

Additional isolation: better safe than sorry.

A possible mitigation for this risk is to add one more layer of isolation/abstraction: hypervisor isolation.

To provide more abstraction, a virtual machine relies on a hardware capability (typically Intel VT-x) to offer yet another level of trap-based isolation. Let's see how malloc operates when the application runs inside a VM:

- the application calls libc's malloc, which (when its heap needs to grow) invokes system call number 12 (brk on x86-64) by triggering an interrupt.

- the interrupt is trapped in kernel space, as configured on the hardware during the operating system's early boot stage.

- the kernel accesses the hardware to actually allocate some physical memory, if the request is legitimate. On bare metal the process would end here, but we are running in a VT-x enabled virtual machine.

- as the guest kernel is virtualized, it actually runs on the host as a user-space program. VT-x makes it possible to have two parallel sets of ring levels (VMX root and non-root operation). So attempts to access the hardware trigger a VM exit, letting the hypervisor execute trapping instructions and act accordingly. In the KVM architecture, this means switching into the host's user mode as soon as possible (!) and using user-mode QEMU for hardware emulation.

The hypervisor is configured to trap this access and translate the low-level hardware operation into an actual physical memory allocation, based on the emulated hardware and the virtual machine's configuration. So when the VM's kernel thinks it's allocating memory block xyz in physical memory, it's actually asking the hypervisor to allocate on an emulated memory model, and the hypervisor can detect the use of an illegal memory range. security++
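
On modern CPUs this translation is largely done by hardware (Intel calls it EPT, AMD calls it NPT), with the hypervisor filling in the tables. The toy Python model below (class name and layout are ours) keeps a guest-frame to host-frame map: the guest only ever sees its own "physical" addresses, and anything not mapped is refused, which on real hardware would cause a VM exit to the hypervisor.

```python
PAGE_SIZE = 4096

class SecondLevelTable:
    """Toy guest-physical -> host-physical map kept by a hypervisor."""

    def __init__(self):
        self.frames = {}  # guest frame number -> host frame number

    def map(self, guest_frame, host_frame):
        self.frames[guest_frame] = host_frame

    def translate(self, guest_phys):
        frame, offset = divmod(guest_phys, PAGE_SIZE)
        if frame not in self.frames:
            # Intel hardware would report an EPT violation here, i.e. a VM exit.
            raise LookupError(f"guest-physical address {guest_phys:#x} is not mapped")
        return self.frames[frame] * PAGE_SIZE + offset

if __name__ == "__main__":
    table = SecondLevelTable()
    table.map(guest_frame=0, host_frame=0x80000)
    print(hex(table.translate(0x42)))  # 0x80000042
```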

This second level of isolation prevents a bug in the virtual machine's kernel from exposing actual physical resources. It also ensures that the resource management logic implemented by the guest kernel is strictly limited to a set of resources allocated at a higher level. Hacking the kernel and then the hypervisor is possible in theory, but extremely hard in practice.

KataContainers is an exact implementation of this idea: a Docker image run by KataContainers' runtime (which grew out of Hyper's runV, an alternative to Docker's runc) uses a KVM hypervisor to run a just-enough virtual machine in which the container starts. And thanks to the Open Container Initiative and Docker's modular design, you can switch from one runtime to the other.

Google's wish list for application isolation

Google decided to explore another approach. A virtual machine comes with a footprint: with a dedicated kernel and hardware emulation, a significant amount of CPU and memory is consumed by translation, and the guest kernel's attempts to optimise resource usage make no sense without a view of the whole platform, and duplicate the host kernel's effort. When you run billions of containers, every useless byte has a cost.

On the other hand, kernel-based isolation is far from enough. It is part of a global solution, but Google needed more. Google wanted to:

- limit the kernel's attack surface: minimize the lines of code involved, and therefore the potential bugs

- limit the kernel's risk of bugs: rely on a structured, memory-safe language. They selected Go (some argue Rust would have been a better choice...)

- limit the impact of the kernel being hacked

Virtual Kernel to the rescue.

Google designed a "User-Space Thin Virtual Kernel" (this is what I call it; I'm not sure about their own name for this concept).

The gVisor kernel is a small, simple thing. It implements only a subset of Linux system calls (~250 out of ~400), without any attempt at clever optimisations. This thin kernel is more or less a kernel firewall, and acts as a barrier against kernel exploits, for example by keeping a buffer overflow from reaching the host kernel.
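
The "firewall" idea can be sketched as a dispatch table: implemented system calls get a handler, everything else is rejected with ENOSYS and never reaches the host kernel. This is a toy Python model, not gVisor's code; the class and handler names are made up, and the numbers are the x86-64 ones (39 is getpid, 60 is exit, 165 is mount).

```python
import errno

class ToySandboxKernel:
    """Toy user-space kernel: only syscalls in its table are implemented."""

    def __init__(self, pid=1):
        self.pid = pid
        self.exit_status = None
        self.handlers = {
            39: self.sys_getpid,  # getpid
            60: self.sys_exit,    # exit
        }

    def sys_getpid(self):
        return self.pid

    def sys_exit(self, status):
        self.exit_status = status
        return 0

    def dispatch(self, number, *args):
        handler = self.handlers.get(number)
        if handler is None:
            return -errno.ENOSYS  # not implemented: the host never sees it
        return handler(*args)

if __name__ == "__main__":
    kernel = ToySandboxKernel()
    print(kernel.dispatch(39))                    # 1
    print(kernel.dispatch(165) == -errno.ENOSYS)  # True: mount is refused
```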

A buffer overflow is a security exploit that relies on the kernel failing to detect that a system call parameter causes more data to be written to a well-known kernel memory location than it can hold. As a result, the adjacent kernel memory gets overwritten, which can allow attackers to execute code in kernel mode. The gVisor kernel is pretty simple in its implementation, which drastically reduces the risk of such a flaw being found. The Linux kernel, in comparison, is millions of lines of C code, with a significant attack surface even though the best experts review its code on a regular basis.
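
The missing check is usually a single comparison between the length the caller claims and the space actually available. The Python sketch below (KernelBuffer and checked_copy are hypothetical names) performs that comparison explicitly before writing; in C, a memcpy that trusted the caller's length would silently overwrite whatever sits after the buffer.

```python
import errno

class KernelBuffer:
    """Fixed-size buffer that checks a caller-supplied length before copying."""

    def __init__(self, capacity):
        self.data = bytearray(capacity)

    def checked_copy(self, payload, claimed_len):
        """Copy claimed_len bytes of payload, or return -EINVAL if it can't fit.

        A C version doing memcpy(buf, payload, claimed_len) without this test
        would write past the end of buf into neighbouring memory.
        """
        if claimed_len < 0 or claimed_len > len(self.data) or claimed_len > len(payload):
            return -errno.EINVAL
        self.data[:claimed_len] = payload[:claimed_len]
        return claimed_len

if __name__ == "__main__":
    buf = KernelBuffer(16)
    print(buf.checked_copy(b"hello", 5))        # 5
    print(buf.checked_copy(b"A" * 64, 64) < 0)  # True: oversized request refused
```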

Sounds crazy? Look at this striking demonstration of a credit card payment terminal being hacked through a buffer overflow.

The gVisor kernel traps application system calls and (re)implements them, acting as a kernel proxy in front of the host's kernel, without any hardware emulation or hypervisor. Being implemented in Go, it doesn't suffer from the permissive C model, which forces developers to manually check buffer sizes, allocated pointers, freed references, etc. This surely comes at some cost (typically, a garbage collector); I bet Google isn't using the standard Go compiler/runtime internally.

gVisor only implements the system calls legitimately needed by the payloads supported on Google App Engine. Java 8 support for Google App Engine in 2017 means that all the system calls a Java 8 JRE requires have been implemented by gVisor. It could probably run many other runtimes, but Google prefers to double-check before any public announcement and commitment to customers.

But the most disruptive architectural decision in gVisor is to run this thin virtual kernel in user space. Some magic has to happen so that a user program's system calls actually get trapped by a virtual kernel that itself runs in user space.

gVisor comes with pluggable platforms, offering two options: ptrace and kvm.

ptrace is documented as the "reference" implementation in the gVisor docs. One should read "portable", as it is the only guaranteed way to run gVisor on arbitrary Linux systems. ptrace is the Linux system call used by debuggers: it lets the kernel stop a traced process at a system call and hand control to a user-space tracer, which can react to it.

Sounds good, but the devil is in the details: every intercepted call stops the traced process and wakes up the tracer, and exchanging data between the two is costly, which makes this design pretty inefficient, especially when accessing large amounts of memory. Not an issue for a debugger, but a huge one for a container runtime. User Mode Linux was designed around this exact same idea, and is mostly abandoned because of its poor performance.
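
A back-of-the-envelope model helps show why this hurts. Each ptrace stop suspends the traced process, schedules the tracer and later switches back, so the sketch below assumes two context switches per stop; with PTRACE_SYSCALL the tracee stops at both entry and exit of each call, while PTRACE_SYSEMU stops only at entry and has the host kernel skip the call. This is an illustration under those assumptions, not a measurement of gVisor, whose actual mechanism may differ.

```python
# Back-of-the-envelope model (illustration, not a benchmark) of intercepting
# system calls with ptrace. Assumption: every ptrace stop costs two context
# switches (tracee -> tracer, then tracer -> tracee).
STOPS_PER_SYSCALL = {
    "PTRACE_SYSCALL": 2,  # tracee stops at syscall entry and again at exit
    "PTRACE_SYSEMU": 1,   # stops at entry only; the host kernel skips the call
}

def context_switches(num_syscalls, mode):
    """Context switches added by ptrace for num_syscalls intercepted calls."""
    return num_syscalls * STOPS_PER_SYSCALL[mode] * 2

if __name__ == "__main__":
    for mode in STOPS_PER_SYSCALL:
        print(mode, context_switches(1_000_000, mode))
```

A native system call, by contrast, only switches the CPU into kernel mode and back without scheduling another process, which is why syscall-heavy workloads feel the difference most.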

The other option is kvm, so... a hypervisor after all. It is documented as experimental; my guess is that Google's custom flavours of KVM and the Linux kernel have been optimised for this usage.
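
To try either platform with Docker, runsc (gVisor's OCI runtime) is registered as an extra runtime in /etc/docker/daemon.json. The Python helper below (add_runtime is our own name) just builds that JSON; the runsc path and the runtime name are assumptions about your install, while the "runtimes", "path" and "runtimeArgs" keys and runsc's --platform flag come from the Docker and gVisor documentation.

```python
import copy
import json

def add_runtime(daemon_config, name, path, runtime_args=None):
    """Return a copy of a daemon.json dict with an extra OCI runtime entry."""
    config = copy.deepcopy(daemon_config)  # leave the caller's dict untouched
    entry = {"path": path}
    if runtime_args:
        entry["runtimeArgs"] = list(runtime_args)
    config.setdefault("runtimes", {})[name] = entry
    return config

if __name__ == "__main__":
    # Assumed install location; adjust to wherever runsc lives on your host.
    cfg = add_runtime({}, "runsc-kvm", "/usr/local/bin/runsc", ["--platform=kvm"])
    print(json.dumps(cfg, indent=4))
```

Once the file is written and dockerd restarted, `docker run --runtime=runsc-kvm ...` picks the sandboxed runtime for that container, while other containers keep using runc.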

Who the hell will use gVisor?

Anyone running on Google App Engine or Google Cloud Functions, for sure, but by the platform's design they don't know it, and they don't have to care.

For others, without a portable, production-ready platform, gVisor is so far "only" an interesting project, which tells us more about how Google hosts random code on a shared infrastructure. If one wants to run containers with KVM isolation, it's pretty unclear to me whether gVisor is a better option than KataContainers, which has been public for longer and has a larger community. On the other hand, the gVisor project has already received feature requests and pull requests to add more system calls. Maybe this can help Google expand its cloud platform to new application runtimes?

Another option would be for a new platform to be implemented. Typically, Google's new operating system Fuchsia, designed to run on mobiles, IoT devices and clusters alike, might be designed with this use case in mind, offering an efficient syscall-to-user-space mechanism (or maybe using more ring levels?).

Last but not least, the gVisor project demonstrates creativity through an alternative approach. Someone might come up with a fresh new idea using this new piece of software in combination with another feature, and build something unexpected... This already happened when the Linux kernel got all those namespace and cgroup things and some technology enthusiasts came up with the emerging concept of "_containers_", creating a whole ecosystem and changing the way we build and deliver software today.

x

112 commentaires:

Is any information about kvm optimization to support gVisor? I have tested the performance of gVisor, and the performance is not very good when using normal kvm module.

The technologies in this era have developed a lot with the advancement of such technologies in every part of our daily life, no matter what the work is about. We have the technologies to make things easier than ever. Such as the Internet provides professional dissertation writing service for students & it is an example of the use of technologies.

Yes, it is correct. The expansion of such technologies in every aspect of our everyday lives, regardless of the work, has resulted in significant advancements in technology in our era. We now have the technology to make things easier than ever before. If we need to do any task, we must use technology.

source:https://webdesignbolt.com/services/logo-design

tadora force (Tadalafil), a PDE5 inhibitor, is available in Tablet form to treat erectile dysfunction. The FDA has approved Tadalafil. Tadora (Tadalafil), a medication that treats Erectile Dysfunction, is available. Besides Tadarise 20, we also have Tadora force , which can be used for the same indications.Tadora force can be used to treat male sexual issues (impotence, Ed). Tadalafil, when combined with intimate stimulation.

Super Vilitra contains the dynamic fixing agent Vardenafil. The conventional Vardenafil is one of the most potent and efficient erectile dysfunction treatment and is effective for almost every male. It is a fast performance and can last at least half as long as Sildenafil. Vardenafil is more expensive to manufacture than sildenafil tablets, but it is backed by impressive results. Super Vilitra which is otherwise known as Generic Vardenafil.

It was very useful for me. Keep sharing such ideas in the future as well. This was actually what I was looking for, and I am glad to come here! Thanks for sharing such information with us.

epoxy repair

Thank you for this awesome post! It was really helpful :)

hi

The new device, known as the GVisor, is a new wearable display that displays video in front of the user’s eyes. This allows the user to watch a video and interact with it in real-time. The device is powered by Intel, and will be available in a variety of colors. This device also help you in getting good assignment help ukservice easily by searching on the web , this is the best device I ever seen .

The match will take place on November 23rd at Qatar’s Khalifa International Stadium. Whoever wins advances to the round of 16. If you want to know how to watch Germany vs Japan Live Stream from anywhere in the world, keep reading!

All the content you post is great and very helpful dissertation writing service

One of the many advantages among all is that you can manage your time and with limited efforts, you can grab the maximum outcomes in the form of high scores. It went outstanding for me when I got cheap master dissertation proposal centered professional service providers. It always helps students in best way and make them able to fulfill their ultimate desire, which is mainly excellence in academics.

Fantastic article. Hatta is a lovely place located near Dubai. If you ever visit Dubai, don't forget to enjoy the Hatta tour Dubai as it is the place that is worth a visit.

I am a little confused! Please allow me to ask some silly questions. Do we need Docker to run gVisor? professional dissertation help uk

gVisor is an impressive tool for providing an additional layer of security for container-based applications. It looks like a great solution for those looking for dissertation writing service an extra layer of security for their applications and data.

One of the numerous benefits is that you may organise your time so that, with a minimum of work, you can achieve the best results in the form of high scores. My experience with the affordable dissertation writing service focused expert service providers was excellent and also get buy dissertation online. It always supports students in the greatest way and enables them to achieve their ultimate goal, which is primarily academic achievement.

Finally, including photographs and videos of the Dubai Desert safari is important. Incorporating visuals can help bring the story to life and make readers feel like they are part of the experience.

Good information and wish to see much more like this...thanks for sharing an information...

average cost of uncontested divorce in virginia

cost of uncontested divorce in virginia

blog is informative for Google had recently enhanced its set of webmasters tools allowing site.if you want another blog visit San Francisco Graphics

Seccomp is a Linux kernel feature that enables applications to restrict their system calls. By using seccomp, applications can be prevented from making potentially dangerous system calls that could compromise the system's security. Buy Essay Online UK

After reading this article on "gVisor in depth", I gained a better understanding of the technical details behind gVisor's container isolation capabilities. It's impressive to see how gVisor leverages the Linux kernel to provide sandboxing and process isolation. I read this article late because i was busy in academic writing services uk.

Your blogs are really good and interesting. It is very great and informative. Now being open-source we can expect gVisor to support more application runtimes and being portable enough so it can replace Docker's runc at some point for those interested in this additional isolation level Sex Crime Lawyer. I got a lots of useful information in your blog. Keeps sharing more useful blogs..

Dissertation Help service that any subject dissertation writing completed on time in the UK.

The GVisor is a brand-new wearable display that puts video in front of the user's eyes. This enables real-time interaction between the user and the video they are watching. The gadget will come in a range of colours and be powered by Intel. This is the nicest item I've ever seen because it makes it simple to find experts to pay someone to take my online course.

Google has recently introduced gVisor, a groundbreaking open-source security sandbox for its App Engine and Cloud Functions platforms. This innovative technology aims to enhance the security and isolation capabilities of these platforms, providing developers with an added layer of protection for their applications.

With gVisor, Google has taken a significant step towards addressing the growing concerns around application security in cloud environments. By utilizing a lightweight virtualization technique known as "sandboxing," gVisor creates a secure environment for running untrusted code. This ensures that even if an application is compromised or contains malicious code, it remains isolated from the underlying host system and other applications.

The decision to open-source gVisor demonstrates Google's commitment to fostering collaboration and innovation within the developer community. By making this technology freely available to the public, Google encourages developers to contribute their expertise and make further advancements in application security.

App Engine and Cloud Functions users can now leverage gVisor's powerful capabilities to enhance the overall security posture of their applications. The sandboxing provided by gVisor helps protect against common vulnerabilities such as privilege escalation attacks or unauthorized access to sensitive data.

Furthermore, gVisor offers compatibility with existing container runtimes like Docker, making it easier for developers to integrate this security solution into their existing workflows without major disruptions. This seamless integration empowers developers to focus on building robust and secure applications without compromising on productivity or efficiency.

In conclusion, Google's introduction of gVisor represents a significant leap forward in ensuring the safety and integrity of applications running on its App Engine and Cloud Functions platforms. With its open-source nature and compatibility with popular container runtimes, developers now have access to an effective security sandbox that enhances application protection while promoting collaboration within the developer community.

Visit Us. Pay Someone To Do My Online Course

The cliché of living in a fishbowl pertains to the diminishing sense of privacy experienced by contemporary individuals, as they constantly find themselves under scrutiny within their workplaces, recreational venues, homes, and during their travels from one location to another. Whether squeezed into congested buses or subways, ensnared in traffic jams where occupants of countless cars and trucks are exposed to one another for extended periods, the feeling of lacking personal space remains pervasive Help write my PhD dissertation. This metaphor not only alludes to the dwindling diversity of adult experiences due to prolonged hours spent in confined office or factory settings, or the mesmerizing gaze fixated on small electronic screens with an incessant flow of information, which leaves no room for reflection and contemplation of issues. It also symbolizes the notion of leading purposeless and monotonous lives, akin to being trapped inside a small, circular bowl, endlessly revolving in circles.

Great article.Abogado Trafico Fredericksburg Va

One of the advantages of gVisor is its ability to provide strong isolation between containers. Traditional containers share the same kernel as the host operating system, which poses potential security risks if an attacker gains access to the kernel. In contrast, gVisor runs each container in its own lightweight virtual machine-like environment with its own kernel implementation. Pay to take my online exam This ensures that even if one container is compromised, it cannot affect other containers or the underlying host system.

Wow, the Cisco 400-Watt Plug Power Supply for Nexus-OS (341-0375-04) is a game-changer when it comes to ensuring reliable power for Cisco Nexus devices. This power supply unit delivers exceptional performance and peace of mind with its robust 400-watt capacity. It's the perfect choice for maintaining the uptime and performance of your network infrastructure.

The article provides a detailed overview of gVisor, a security sandbox for applications, highlighting its importance in Google's infrastructure. It compares gVisor to hypervisor-based solutions like KataContainers and discusses its lightweight Go-based "Thin Virtual Kernel" architecture, highlighting its potential for enhanced security and performance. abogado litigante patrimonial

In the world of superfoods, sprouted chia seeds have emerged as a true nutritional powerhouse. These tiny seeds pack a mighty punch when it comes to health benefits and culinary versatility. So, what exactly are sprouted chia seeds, and why should they be part of your daily diet? Let’s dive into the fascinating world of sprouted chia seeds and uncover their wonders.

health benefits of chia seeds
